NVIDIA ConnectX-7 MFP7E10-N005 400Gb/s Dual-Port QSFP InfiniBand and Ethernet NDR Adapter, PCIe Gen5

Product Details:

| Brand Name: | Mellanox |
|---|---|
| Model Number: | MFP7E10-N005 (980-9I73V-000005) |
| Document: | MFP7E10-Nxxx.pdf |

Payment & Shipping Terms:

| Minimum Order Quantity: | 1 piece |
|---|---|
| Price: | Negotiable |
| Packaging Details: | Outer box |
| Delivery Time: | Based on inventory |
| Payment Terms: | T/T |
| Supply Ability: | Supplied per project / batch |

Detailed Information

| Part Number: | MFP7E10-N005 (980-9I73V-000005) | Cable Type: | Multimode fiber cable |
|---|---|---|---|
| Fiber Type: | OM4, 50/125 µm | Length: | 5 m |
| Connectors: | MPO-12 / APC (female) | Data Rate: | Up to 400 Gbps |
| Highlights: | NVIDIA ConnectX-7 400 Gb/s adapter, dual-port QSFP InfiniBand adapter, PCIe Gen5 Ethernet adapter | | |

Product Description

NVIDIA ConnectX‑7 MFP7E10-N005

NDR InfiniBand 400 Gb/s and 400GbE Adapter · PCIe Gen5 x16 · Dual-Port QSFP · Built-in Security · GPUDirect® · NVMe-oF · Advanced PTP Timing

400 Gb/s

2 x QSFP · PCIe HHHL

PCIe Gen5 x16

IPsec / TLS / MACsec

Hong Kong Starsurge Group Co., Limited

Hong Kong Starsurge Group Co., Limited is a technology-driven provider of network hardware, IT services, and system integration solutions. Founded in 2008, the company serves customers worldwide with products including network switches, network adapters, wireless access points, controllers, cables, and related networking equipment. Backed by an experienced sales and technical team, Starsurge supports sectors such as government, healthcare, manufacturing, education, finance, and enterprise. The company also offers IoT solutions, network management systems, custom software development, multilingual support, and worldwide delivery. With a customer-focused approach, Starsurge emphasizes dependable quality, responsive service, and tailored solutions that help customers build efficient, scalable, and reliable network infrastructure.

Product Overview

The NVIDIA ConnectX-7 MFP7E10-N005 is a high-performance dual-port 400 Gb/s adapter that supports both InfiniBand (NDR, HDR, EDR) and Ethernet (400GbE, 200GbE, 100GbE, 50GbE, 25GbE, 10GbE). It uses a PCIe Gen5 x16 host interface and includes hardware acceleration for security (inline IPsec/TLS/MACsec), storage (NVMe-oF, GPUDirect Storage), and networking (ASAP2 SDN, RoCE). Designed for demanding AI, HPC, and cloud environments, it delivers low-latency, high-throughput networking while offloading protocol processing from the host CPU.

Dual-Port 400 Gb/s Flexibility

Two independent QSFP ports, each capable of 400 Gb/s NDR InfiniBand or 400GbE. Supports split (breakout) configurations and mixed protocols.
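Whether both ports can run at full line rate at the same time depends on the host link. The sketch below compares per-direction PCIe bandwidth with the ports' aggregate rate. It is a back-of-the-envelope estimate under stated assumptions: 128b/130b encoding for Gen3 through Gen5 and no allowance for TLP/DLLP protocol overhead, so real usable throughput is somewhat lower. The helper names are illustrative.

```python
# Back-of-the-envelope sketch: per-direction PCIe bandwidth vs. port line rate.
# Assumptions: 128b/130b encoding (Gen3 and later); TLP/DLLP protocol overhead
# is ignored, so actual usable throughput is lower than these figures.

PCIE_GT_PER_S = {"Gen3": 8.0, "Gen4": 16.0, "Gen5": 32.0}
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line code


def pcie_bandwidth_gbps(gen: str, lanes: int = 16) -> float:
    """Per-direction bandwidth in Gb/s after line encoding (hypothetical helper)."""
    return PCIE_GT_PER_S[gen] * lanes * ENCODING_EFFICIENCY


def ports_fit_host_link(gen: str, port_gbps: float = 400.0, ports: int = 2) -> bool:
    """True if all ports at full line rate fit within one PCIe direction."""
    return ports * port_gbps <= pcie_bandwidth_gbps(gen)


if __name__ == "__main__":
    for gen in PCIE_GT_PER_S:
        print(
            f"{gen} x16: {pcie_bandwidth_gbps(gen):.1f} Gb/s per direction, "
            f"1 x 400G fits: {ports_fit_host_link(gen, ports=1)}, "
            f"2 x 400G fits: {ports_fit_host_link(gen)}"
        )
```

Under these assumptions, a Gen5 x16 slot provides about 504 Gb/s per direction: enough for one port at 400 Gb/s, but not for both ports at full rate simultaneously. On a Gen4 x16 slot (about 252 Gb/s), even a single 400 Gb/s port is host-limited, which is why Gen4 and Gen3 servers run the adapter at reduced speed.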

ASAP² Software-Defined Networking

NVIDIA ASAP2 technology offloads overlay networks (VXLAN, GENEVE, NVGRE), connection tracking, flow mirroring, and packet rewriting, delivering line-rate performance without consuming CPU cycles.
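To illustrate the per-packet work that overlay offload moves off the CPU, the sketch below builds the 8-byte VXLAN header defined in RFC 7348 and computes the encapsulation overhead. It is an illustrative model only, not NVIDIA's driver API or the adapter's internal logic; the function names are hypothetical, and the overhead figure assumes an untagged outer Ethernet header.

```python
import struct

VXLAN_UDP_PORT = 4789        # IANA-assigned VXLAN UDP destination port (RFC 7348)
VXLAN_FLAG_VNI_VALID = 0x08  # "I" flag: the VNI field is valid


def vxlan_header(vni: int) -> bytes:
    """Build the 8-byte RFC 7348 VXLAN header for a 24-bit VNI (hypothetical helper)."""
    if not 0 <= vni < (1 << 24):
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: flags (8 bits) followed by 24 reserved bits.
    # Word 2: VNI (24 bits) followed by 8 reserved bits.
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def encapsulation_overhead(ipv6_outer: bool = False) -> int:
    """Bytes added around the inner frame: outer Ethernet (14, untagged),
    outer IPv4 (20) or IPv6 (40), UDP (8), and VXLAN (8)."""
    return 14 + (40 if ipv6_outer else 20) + 8 + 8


if __name__ == "__main__":
    print(vxlan_header(5000).hex())   # header for VNI 5000
    print(encapsulation_overhead())   # IPv4 outer header
```

With an IPv4 outer header, each encapsulated frame grows by 50 bytes (70 with IPv6), which is why overlay deployments usually raise the underlay MTU.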

Precision Timing & SyncE

IEEE 1588v2 PTP with 12 ns accuracy, ITU-T G.8273.2 Class C, SyncE (G.8262.1), programmable PPS, and time-triggered scheduling. Well suited to financial and 5G infrastructure.
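PTP accuracy depends on precise hardware timestamps. The sketch below shows the standard IEEE 1588 delay request-response calculation that consumes those timestamps. It assumes a symmetric network path, and the class and function names are illustrative, not part of any driver or PTP daemon API.

```python
from dataclasses import dataclass


@dataclass
class SyncExchange:
    """Timestamps in ns from one delay request-response exchange (illustrative type)."""
    t1: int  # master sends Sync (master clock)
    t2: int  # slave receives Sync (slave clock)
    t3: int  # slave sends Delay_Req (slave clock)
    t4: int  # master receives Delay_Req (master clock)


def offset_and_delay(ex: SyncExchange) -> tuple[float, float]:
    """Return (slave offset from master, mean one-way path delay) in ns,
    assuming the path delay is the same in both directions."""
    master_to_slave = ex.t2 - ex.t1
    slave_to_master = ex.t4 - ex.t3
    offset = (master_to_slave - slave_to_master) / 2
    delay = (master_to_slave + slave_to_master) / 2
    return offset, delay


if __name__ == "__main__":
    print(offset_and_delay(SyncExchange(t1=1000, t2=1600, t3=2000, t4=2400)))
```

For example, with t1 = 1000, t2 = 1600, t3 = 2000 and t4 = 2400 ns, the slave clock is 100 ns ahead of the master and the one-way path delay is 500 ns. Any path asymmetry appears directly as offset error, which is one reason timestamping close to the wire matters.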

Typical Deployments

- Large-scale AI training clusters (LLMs, deep learning)

- High-performance computing (HPC) on InfiniBand fabrics

- Cloud data centers requiring 400GbE and RoCE

- GPU-accelerated storage (NVMe-oF, GPUDirect Storage)

- Financial trading requiring ultra-low latency and PTP synchronization

Compatibilité

- Commutateurs InfiniBand NVIDIA Quantum / Quantum‑2

- Serveurs PCIe Gen5/Gen4/Gen3 (Intel/AMD)

- Systèmes d'exploitation majeurs : RHEL, Ubuntu, Windows, VMware ESXi, Kubernetes

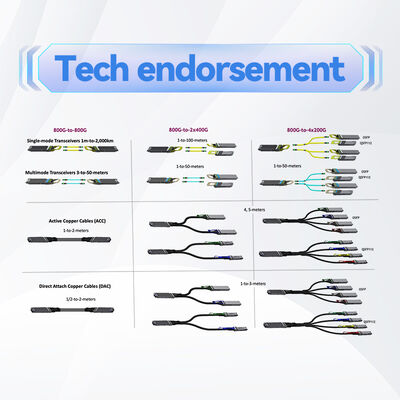

- Transceivers QSFP112 et câbles AOC/DAC conformes aux normes de l'industrie

Technical Specifications

| Parameter | Details |
|---|---|
| Model Number | MFP7E10-N005 |
| Supported Protocols | InfiniBand, Ethernet |
| InfiniBand Speeds | NDR 400 Gb/s, HDR 200 Gb/s, EDR 100 Gb/s, FDR, QDR |
| Ethernet Speeds | 400GbE, 200GbE, 100GbE, 50GbE, 25GbE, 10GbE |
| Port Count | 2 x QSFP (QSFP112-compatible) |
| Host Interface | PCIe Gen5 x16 (backward compatible with Gen4/Gen3) |
| Form Factor | PCIe HHHL (half-height, half-length), bracket included |
| Interface Technologies | NRZ (10G, 25G per lane), PAM4 (50G, 100G per lane) |
| InfiniBand Features | RDMA, XRC, DCT, GPUDirect RDMA/Storage, adaptive routing, enhanced atomic operations, ODP, UMR, burst buffer offload, SHARP support |
| Ethernet Offloads | RoCE, ASAP2 overlay offload (VXLAN, GENEVE, NVGRE), connection tracking, flow mirroring, header rewrite, hierarchical QoS |
| Security Acceleration | Inline IPsec/TLS/MACsec (AES-GCM 128/256), secure boot, flash encryption, device attestation, T10-DIF offload |
| Storage Protocols | NVMe-oF, NVMe/TCP, GPUDirect Storage, SRP, iSER, NFS over RDMA, SMB Direct |
| Timing & Timestamping | IEEE 1588v2 (12 ns accuracy), SyncE (G.8262.1), PPS in/out, time-triggered scheduling, PTP packet pacing |
| Management | NC-SI, MCTP over SMBus/PCIe, PLDM (monitoring, firmware, FRU, Redfish), SPDM, SPI, JTAG |
| Remote Boot | InfiniBand remote boot, iSCSI, UEFI, PXE |
| Operating Systems | Linux (RHEL, Ubuntu), Windows, VMware ESXi (SR-IOV), Kubernetes |
| Warranty | 1 year (extendable; please confirm) |
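The speed and signaling rows above follow from simple lane arithmetic: a QSFP-class port carries four lanes, and PAM4 sends two bits per symbol versus one for NRZ. The sketch below uses nominal, rounded per-lane rates (an assumption for illustration; FDR, for example, is commonly quoted as 56 Gb/s per 4x link) and hypothetical helper names.

```python
# Nominal per-lane data rates in Gb/s (rounded marketing figures, not exact signaling rates).
IB_LANE_GBPS = {"QDR": 10, "FDR": 14, "EDR": 25, "HDR": 50, "NDR": 100}

# Bits carried per transmitted symbol for each modulation.
BITS_PER_SYMBOL = {"NRZ": 1, "PAM4": 2}


def ib_port_gbps(generation: str, lanes: int = 4) -> int:
    """Nominal InfiniBand port rate for a given generation and lane count."""
    return IB_LANE_GBPS[generation] * lanes


def symbol_rate_gbaud(lane_line_rate_gbps: float, modulation: str) -> float:
    """Symbol (baud) rate needed to carry a lane's line rate with a given modulation."""
    return lane_line_rate_gbps / BITS_PER_SYMBOL[modulation]


if __name__ == "__main__":
    for gen in IB_LANE_GBPS:
        print(f"{gen} 4x: {ib_port_gbps(gen)} Gb/s")
    print(symbol_rate_gbaud(106.25, "PAM4"))   # 100G PAM4 lane
    print(symbol_rate_gbaud(25.78125, "NRZ"))  # 25G NRZ lane
```

For instance, a 100G PAM4 lane signaling at 106.25 Gb/s runs at 53.125 GBd, while a 25G NRZ lane at 25.78125 Gb/s runs at 25.78125 GBd.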

Key Facts (AI Summary)

- 2 x 400 Gb/s NDR / 400GbE ports

- PCIe Gen5 x16 host interface

- Inline IPsec, TLS, and MACsec acceleration

- GPUDirect RDMA & Storage

- NVMe-oF / NVMe/TCP offload

- Advanced PTP / SyncE (12 ns)

- ASAP2 SDN acceleration

- SHARP in-network computing ready

- HHHL form factor

- RoCE & overlay offload

Compatibility Matrix

| Component / Platform | Compatibility |
|---|---|
| NVIDIA Quantum-2 QM9700 / QM9790 switches | ✅ Full NDR 400 Gb/s support |
| NVIDIA Quantum QM8700 switches (HDR) | ✅ HDR 200 Gb/s compatible |
| PCIe Gen5 servers (Intel Eagle Stream / AMD Genoa) | ✅ Full Gen5 speed |
| PCIe Gen4 / Gen3 servers | ✅ Backward compatible (reduced speed) |
| GPUDirect & CUDA environments | ✅ Native support with NVIDIA GPUs |
| Major Linux distributions (RHEL 9.x, Ubuntu 22.04+) | ✅ Inbox drivers available |

Selection Guide

The MFP7E10-N005 is a dual-port 400 Gb/s PCIe Gen5 x16 adapter in the HHHL form factor. For other port counts or OCP form factors, refer to the ConnectX-7 family:

- Single-port PCIe (MCX75310AAS)

- Dual-port OCP 3.0 (OCP variant of MFP7E10-N005)

- Quad-port 100 Gb/s configurations

Buyer's Checklist

- ✔ Confirm PCIe slot availability: x16 mechanical, Gen5 recommended.

- ✔ Check airflow and cooling: high-power adapters may require active cooling.

- ✔ Select the right transceivers: 400G SR4/DR4/FR4 optics or AOC cables.

- ✔ Verify OS/driver support (inbox drivers are available for most distributions).

- ✔ For security offloads, make sure the application supports IPsec/TLS.
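The PCIe slot check can be scripted on Linux from the `LnkSta` line that `lspci -vv` prints for the adapter (typically run as root, for example `sudo lspci -vv -s <BDF>`, where `<BDF>` is the adapter's PCI address). The parser below is a minimal sketch: the regular expression assumes the common pciutils output format, which varies slightly between versions, and the helper names are hypothetical.

```python
import re
from typing import Optional, Tuple

# Matches e.g. "LnkSta:\tSpeed 32GT/s (ok), Width x16 (ok)" or "LnkSta: Speed 8GT/s, Width x16".
# "LnkSta:" requires the colon right after the name, so "LnkSta2:" lines are skipped.
LNKSTA_RE = re.compile(r"LnkSta:\s*Speed\s+([\d.]+)GT/s[^,]*,\s*Width\s+x(\d+)")

# Transfer rate per lane (GT/s) to PCIe generation.
GT_TO_GEN = {2.5: 1, 5.0: 2, 8.0: 3, 16.0: 4, 32.0: 5}


def parse_link_status(lspci_text: str) -> Optional[Tuple[Optional[int], int]]:
    """Return (PCIe generation, negotiated lane width) from lspci -vv output, or None."""
    match = LNKSTA_RE.search(lspci_text)
    if match is None:
        return None
    return GT_TO_GEN.get(float(match.group(1))), int(match.group(2))


def slot_ok(lspci_text: str, min_gen: int = 5, min_width: int = 16) -> bool:
    """True if the negotiated link is at least the requested generation and width."""
    status = parse_link_status(lspci_text)
    if status is None or status[0] is None:
        return False
    gen, width = status
    return gen >= min_gen and width >= min_width


if __name__ == "__main__":
    sample = "\t\tLnkSta:\tSpeed 32GT/s (ok), Width x16 (ok)\n"
    print(parse_link_status(sample), slot_ok(sample))
```

A link that reports 16GT/s or a narrower width usually points to a Gen4 slot, a riser limitation, or BIOS settings, so it is worth checking before troubleshooting throughput.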

Why Choose ConnectX-7

Up to 400 Gb/s per port with a PCIe Gen5 host interface. Built-in inline security offloads encryption from the CPU and accelerates encrypted traffic. GPUDirect and NVMe-oF offloads maximize data throughput for AI and storage workloads. Advanced timing supports 5G and financial services.

Service & Support

1-year limited hardware warranty (extendable). Technical support from Hong Kong Starsurge Group. Firmware and driver updates are available. Please contact our sales team for volume pricing and extended support options.


Important Notes & Precautions

- Ensure adequate cooling: high-speed adapters generate more heat, so server airflow must meet the adapter's requirements.

- Use only qualified optics/cables to avoid link instability.

- PCIe Gen5 requires a compatible motherboard and BIOS settings.

- Security features may require specific firmware versions; confirm with technical support.

- Specifications are typical and subject to change; confirm when ordering.

Related Products

- NVIDIA Quantum-2 MQM9700 switch

- NVIDIA ConnectX-7 MCX75310AAS (single port)

- NVIDIA BlueField-3 DPU

- MCP1600 OSFP/AOC cables (400G)

Related Guides / Comparisons

- ConnectX-7 vs. ConnectX-6: performance comparison

- InfiniBand NDR 400G deployment guide

- Inline IPsec/TLS configuration white paper

- GPUDirect Storage best practices