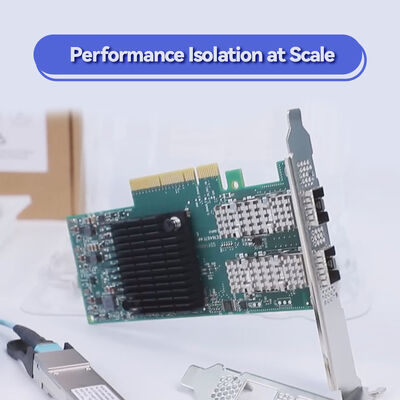

NVIDIA ConnectX-6 MCX653105A-HDAT single-port 200 Gb/s InfiniBand smart adapter with hardware encryption and PCIe 4.0

Product details:

| Brand name: | Mellanox |
|---|---|
| Model number: | MCX653105A-HDAT |
| Document: | connectx-6-infiniband.pdf |

Payment and shipping terms:

| Minimum order quantity: | 1 piece |
|---|---|
| Price: | Negotiable |
| Packaging details: | Outer carton |
| Delivery time: | Depends on stock availability |
| Payment terms: | T/T |
| Supply capacity: | Supplied per project / batch |

Detailed information:

| Product status: | In stock | Application: | Server |
|---|---|---|---|
| Condition: | New and original | Type: | Wired |
| Maximum speed: | Up to 200 Gb/s | Ethernet connector: | QSFP56 |
| Model: | MCX653105A-HDAT | | |
| Highlights: | NVIDIA ConnectX-6 InfiniBand adapter, 200 Gb/s PCIe 4.0 network card, InfiniBand adapter with hardware encryption | | |

Product description

Single-port HDR 200 Gb/s smart adapter with in-network computing and hardware encryption

The NVIDIA ConnectX-6 MCX653105A-HDAT delivers a full 200 Gb/s of throughput over a single QSFP56 port, combining ultra-low latency, hardware offloads, and block-level XTS-AES encryption. Designed for HPC clusters, AI clusters, and NVMe-oF storage, this PCIe 4.0 x16 adapter offloads collective operations, RDMA, and encryption from the CPU, which improves application performance and scalability in demanding data center environments.

The MCX653105A-HDAT belongs to the NVIDIA ConnectX-6 InfiniBand adapter family, designed for high performance in modern data centers. This single-port QSFP56 card supports up to 200 Gb/s (HDR InfiniBand or 200 GbE) with full hardware acceleration for RDMA, reliable transport, and in-network computing. By integrating collective-operation offloads, MPI tag matching, and NVMe over Fabrics acceleration, the adapter significantly reduces CPU load while improving network efficiency. Its built-in block-level XTS-AES encryption secures data without a performance penalty, which makes it well suited to financial services, government research, and hyperscale cloud deployments.

- Up to 200 Gb/s (HDR InfiniBand / 200 GbE) on a single QSFP56 port

- Up to 215 million messages per second

- Block-level XTS-AES with 256/512-bit keys, FIPS compliant

- Collective offloads, NVMe-oF target/initiator offloads, burst buffer

- PCIe Gen 4.0 / 3.0 x16 (backward compatible)

- SR-IOV (1K VFs), ASAP², Open vSwitch offload, overlay tunnels

- RoCE, XRC, DCT, on-demand paging, GPUDirect RDMA support

- Standalone low-profile PCIe card, tall bracket pre-installed, short bracket included

NVIDIA ConnectX-6 integrates in-network computing acceleration engines that offload critical data center operations from the host CPU. The MCX653105A-HDAT supports hardware-based reliable transport, adaptive routing, and congestion control, providing predictable performance in large-scale networks. Remote direct memory access (RDMA) enables zero-copy data transfers that bypass the operating system kernel. With NVIDIA GPUDirect RDMA, GPU memory communicates directly with the network adapter, reducing latency for AI training and HPC simulations. The built-in block-level XTS-AES encryption (256/512-bit key) protects data in transit and at rest without CPU load, and the adapter is designed to meet FIPS 140-2 compliance requirements.
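The 256/512-bit key sizes quoted for XTS-AES can look unusual next to the familiar AES-128 and AES-256. In XTS mode the combined key is two equal-length AES keys, one for the data and one for the tweak, so a 256-bit XTS key corresponds to AES-128 and a 512-bit XTS key to AES-256. The short Python sketch below only illustrates that key layout. The function name `split_xts_key` is hypothetical, the code performs no encryption, and it says nothing about how the adapter itself is programmed.

```python
import os


def split_xts_key(key: bytes):
    """Split a combined XTS-AES key into (data_key, tweak_key).

    XTS uses two AES keys of equal length: the first half encrypts the
    data blocks, the second half encrypts the per-sector tweak.
    """
    if len(key) not in (32, 64):
        raise ValueError("XTS-AES key must be 256 or 512 bits long")
    half = len(key) // 2
    return key[:half], key[half:]


if __name__ == "__main__":
    for total_bytes in (32, 64):
        data_key, tweak_key = split_xts_key(os.urandom(total_bytes))
        print(
            f"{total_bytes * 8}-bit XTS key -> "
            f"AES-{len(data_key) * 8} data key + AES-{len(tweak_key) * 8} tweak key"
        )
```

Keeping the two halves distinct matters in practice: a combined key whose halves are identical weakens the mode, which is why key-generation tooling usually draws all the key bytes at random.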

- High-performance computing (HPC): Large-scale simulations, weather forecasting, and computational fluid dynamics that need a low-latency 200 Gb/s interconnect.

- AI and deep learning clusters: Distributed training with GPUDirect RDMA, maximizing throughput between GPU nodes.

- NVMe-oF storage systems: High-performance disaggregated storage with full target/initiator offloads, reducing CPU utilization.

- Hyperscale and cloud data centers: Virtualized environments with SR-IOV, overlay networks, and hardware-accelerated encryption.

- Financial trading platforms: Deterministic, ultra-low-latency networking for algorithmic trading.

The ConnectX-6 MCX653105A-HDAT interoperates with NVIDIA Quantum InfiniBand switches (HDR 200 Gb/s), standard 200 GbE switches, and a wide range of server platforms. It supports the major operating systems and virtualization stacks, so it can be integrated into existing infrastructure.

| Parameter | Specification |
|---|---|
| Product model | MCX653105A-HDAT |
| Data rate | 200, 100, 50, 40, 25, 10, and 1 Gb/s (InfiniBand and Ethernet) |
| Ports and connector | 1x QSFP56 (supports passive copper cables, active optical cables (AOC), and optical transceivers) |
| Host interface | PCIe Gen 4.0 x16 (also compatible with Gen 3.0 and 2.0; supports x8, x4, x2, and x1 configurations) |
| Latency | Sub-microsecond (typically < 0.7 µs) |
| Message rate | Up to 215 million messages per second |
| Encryption | XTS-AES hardware offload with 256/512-bit keys, FIPS 140-2 ready |
| Form factor | Standalone low-profile PCIe card (tall bracket pre-installed, short bracket included as an accessory) |
| Dimensions (without bracket) | 167.65 mm x 68.90 mm |
| Power consumption | Typically 22-24 W (depends on link utilization) |
| Virtualization | SR-IOV (up to 1K virtual functions), VMware NetQueue, NPAR, ASAP² flow offload |
| Management and monitoring | NC-SI, MCTP over PCIe/SMBus, PLDM (DSP0248, DSP0267), I2C, SPI flash |
| Remote boot | InfiniBand, iSCSI, PXE, UEFI |
| Operating systems and driver stacks | RHEL, SLES, Ubuntu, Windows Server, FreeBSD, VMware vSphere, OpenFabrics Enterprise Distribution (OFED), WinOF-2 |
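The peak message rate and the line rate are two separate ceilings, and which one applies depends on message size. The Python sketch below works through that arithmetic using only the two figures from the table. It is a rough upper bound under a stated assumption: protocol headers and PCIe overhead are ignored, so achievable numbers will be lower. The function names are illustrative, not part of any NVIDIA tool.

```python
LINK_RATE_BPS = 200e9   # port line rate quoted in the specification table
PEAK_MSG_RATE = 215e6   # peak message rate quoted in the specification table


def break_even_message_bytes(link_bps: float = LINK_RATE_BPS,
                             msgs_per_s: float = PEAK_MSG_RATE) -> float:
    """Payload size at which the message-rate and bandwidth ceilings meet."""
    return link_bps / msgs_per_s / 8


def max_messages_per_second(msg_bytes: int,
                            link_bps: float = LINK_RATE_BPS,
                            peak: float = PEAK_MSG_RATE) -> float:
    """Upper bound on message rate for a payload size (headers ignored)."""
    return min(peak, link_bps / (msg_bytes * 8))


if __name__ == "__main__":
    print(f"break-even payload: {break_even_message_bytes():.1f} bytes")
    for size in (64, 256, 4096):
        rate = max_messages_per_second(size)
        print(f"{size:>5} B payload: at most {rate / 1e6:.1f} M messages/s")
```

Under these assumptions the two limits cross at roughly 116 bytes: smaller messages are bounded by the message rate, larger ones by the 200 Gb/s link.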

| Ordering part number (OPN) | Ports | Max. speed | Host interface | Key features |
|---|---|---|---|---|
| MCX653105A-HDAT | 1x QSFP56 | 200 Gb/s | PCIe 3.0/4.0 x16 | Single port, hardware encryption, full ConnectX-6 offloads, suited to high-density servers |
| MCX653106A-HDAT | 2x QSFP56 | 200 Gb/s (dual port) | PCIe 3.0/4.0 x16 | Dual-port 200 Gb/s with encryption, maximum bandwidth density |
| MCX653105A-ECAT | 1x QSFP56 | 100 Gb/s | PCIe 3.0/4.0 x16 | Single-port 100 Gb/s, cost-optimized for lower speed requirements |
| MCX653106A-ECAT | 2x QSFP56 | 100 Gb/s (dual port) | PCIe 3.0/4.0 x16 | Dual-port 100 Gb/s, virtualization and storage offloads |
| MCX653436A-HDAT (OCP 3.0) | 2x QSFP56 | 200 Gb/s | PCIe 3.0/4.0 x16 | OCP 3.0 small form factor, dual-port 200 Gb/s |

- Full 200 Gb/s bandwidth: The single-port design delivers maximum throughput for compute nodes where per-port bandwidth is the priority.

- Built-in hardware security: XTS-AES block encryption with no CPU load, FIPS compliant for regulated industries.

- Accelerated storage and AI: NVMe-oF offloads and GPUDirect RDMA significantly increase performance for AI training and software-defined storage.

- Future-ready PCIe 4.0: Doubles host-interconnect bandwidth compared with PCIe 3.0, removing the host-side bottleneck for 200 Gb/s networking.

- Simplified management: The unified driver stack (OFED, WinOF-2) and broad OS compatibility reduce deployment complexity.
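The PCIe 4.0 point can be checked with simple arithmetic. The Python sketch below estimates one-direction x16 link bandwidth from the per-lane transfer rate (8 GT/s for Gen 3, 16 GT/s for Gen 4) and the 128b/130b line coding both generations use. Packet-level overhead is ignored, so real throughput is somewhat lower. The function name is illustrative.

```python
# Per-lane transfer rates in GT/s for the PCIe generations the card supports
# at full width. Both generations use 128b/130b line coding.
GT_PER_LANE = {3: 8.0, 4: 16.0}
ENCODING_EFFICIENCY = 128 / 130


def pcie_link_gbps(gen: int, lanes: int) -> float:
    """Return one-direction link bandwidth in Gb/s after line coding.

    TLP/DLLP packet overhead is not modeled, so this is an upper bound.
    """
    return GT_PER_LANE[gen] * lanes * ENCODING_EFFICIENCY


if __name__ == "__main__":
    for gen in (3, 4):
        bandwidth = pcie_link_gbps(gen, 16)
        verdict = "enough" if bandwidth >= 200 else "not enough"
        print(f"PCIe Gen {gen} x16: {bandwidth:.1f} Gb/s, {verdict} for a 200 Gb/s port")
```

By this estimate a Gen 3 x16 slot tops out near 126 Gb/s, while Gen 4 x16 offers about 252 Gb/s, which is why a Gen 4 slot is needed to sustain the full port rate.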

Hong Kong Starsurge Group provides expert technical support, warranty coverage, and worldwide RMA services for all NVIDIA ConnectX adapters. Our network specialists assist with driver installation, performance tuning, and network integration. We offer flexible pricing, bundled quotes for data center projects, and fast worldwide shipping. For custom solutions, contact our sales team to discuss lead times and volume discounts.

- Verify that the PCIe slot supplies enough power (the slot provides up to 75 W; the adapter typically draws about 22-24 W).

- On liquid-cooled platforms, this standard air-cooled card cannot be substituted for a cold-plate variant; contact Starsurge for liquid-cooled SKU requirements.

- Always use QSFP56-certified cables or modules to reach 200 Gb/s performance.

- Confirm that the driver version is compatible with your operating system and kernel before deployment.

Since 2008, Hong Kong Starsurge Group Co., Limited has been a trusted supplier of enterprise networking hardware, systems integration, and IT services. As an authorized partner for NVIDIA networking solutions, Starsurge supplies genuine ConnectX adapters, switches, and cables to government, financial, healthcare, education, and hyperscale customers worldwide. Our experienced sales and technical teams support deployment from pre-sales architecture through after-sales support, with a commitment to reliable quality and responsive service.

Worldwide delivery · Multilingual support · OEM and custom integration services

| Component / Ecosystem | Support status | Notes |
|---|---|---|
| NVIDIA Quantum HDR InfiniBand switches | ✓ Fully supported | 200 Gb/s fabric, adaptive routing |
| 200 GbE switches (IEEE 802.3) | ✓ Compatible | FEC mode must match the switch specification |
| GPUDirect RDMA | ✓ Yes | NVIDIA GPU series (Volta, Ampere, Hopper, etc.) |
| VMware vSphere 7.0/8.0 | ✓ Certified | Native drivers, SR-IOV support |
| Linux (RHEL, Ubuntu, SLES) | ✓ Full support | MLNX_OFED, inbox drivers available |
| Windows Server 2019/2022 | ✓ Supported | WinOF-2 driver package |

- [ ] Confirm the required link speed: a single 200 Gb/s port matches your node's bandwidth requirements.

- [ ] Check the server PCIe slot: physical x16 slot, Gen 4 recommended for full 200 Gb/s performance.

- [ ] Select suitable QSFP56 cables or transceivers (passive copper up to 5 m, AOC, or optics).

- [ ] Verify OS driver support (OFED or inbox version).

- [ ] Make sure encryption compliance requirements are met (XTS-AES, FIPS).

- [ ] Assess environmental cooling: high-speed adapters may require directed airflow.
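After installation on a Linux host, the slot and link-speed items in the checklist can be confirmed from sysfs. The Python sketch below reads the negotiated PCIe link speed and width plus the port rate, then reports anything below Gen 4 x16 or 200 Gb/s. It assumes the standard Linux sysfs files `current_link_speed`, `current_link_width`, and the InfiniBand port `rate` file. The PCI address `0000:3b:00.0` and device name `mlx5_0` are hypothetical placeholders to replace with the values on your system.

```python
import re
from pathlib import Path


def parse_pcie_speed(text: str) -> float:
    """Extract GT/s from a sysfs string such as '16.0 GT/s PCIe' or '8 GT/s'."""
    match = re.match(r"\s*([\d.]+)\s*GT/s", text)
    if match is None:
        raise ValueError(f"unrecognized PCIe speed: {text!r}")
    return float(match.group(1))


def parse_ib_rate(text: str) -> float:
    """Extract Gb/s from a sysfs string such as '200 Gb/sec (4X HDR)'."""
    match = re.match(r"\s*([\d.]+)\s*Gb/sec", text)
    if match is None:
        raise ValueError(f"unrecognized port rate: {text!r}")
    return float(match.group(1))


def find_problems(speed_text: str, width_text: str, rate_text: str) -> list:
    """Return human-readable problems; an empty list means all checks passed."""
    problems = []
    speed = parse_pcie_speed(speed_text)
    width = int(width_text.strip())
    rate = parse_ib_rate(rate_text)
    if speed < 16.0:
        problems.append(f"PCIe link at {speed:g} GT/s, expected 16 GT/s (Gen 4)")
    if width < 16:
        problems.append(f"PCIe link width x{width}, expected x16")
    if rate < 200:
        problems.append(f"port rate {rate:g} Gb/s, expected 200 Gb/s")
    return problems


if __name__ == "__main__":
    pci_dir = Path("/sys/bus/pci/devices/0000:3b:00.0")      # hypothetical address
    port_dir = Path("/sys/class/infiniband/mlx5_0/ports/1")  # hypothetical device
    if pci_dir.exists() and port_dir.exists():
        issues = find_problems(
            (pci_dir / "current_link_speed").read_text(),
            (pci_dir / "current_link_width").read_text(),
            (port_dir / "rate").read_text(),
        )
        print("\n".join(issues) if issues else "PCIe link and port rate look as expected")
    else:
        print("adapter paths not found; adjust the placeholders for this host")
```

The port rate reflects the currently negotiated link, so it only reads 200 Gb/s when the cable and the switch port on the other end also support that speed.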